Some properties, such as temperature, can take both positive and negative values (unless expressed in kelvin), but a gas concentration can never go below zero. Yet some instruments report sub-zero concentrations. This may look like an instrument error at first glance, but as long as the reading is close to the detection limit, it is not an error but a sign of a properly working instrument.

Actual and Measured Values

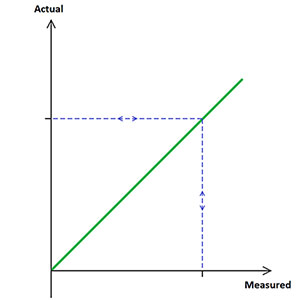

In an ideal situation, the true physical property of a parameter, the “actual value”, and the value by which we represent that property through a measurement, the “measured value” or the “reading”, are identical:

However, there is almost always a difference between the actual value and the reading. The difference is due to uncertainties in the measurement process. Several factors can affect the uncertainty, such as the fundamental measurement principle, tolerances in the production of the measurement device, and the precision with which the value can be read.

Uncertainties

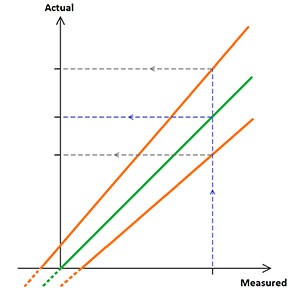

In complex measurement systems, many individual factors contribute to the total uncertainty. Some of these factors give rise to a fixed measurement uncertainty regardless of the reading, while others are proportional to the reading. Combined, the uncertainties can look like this (green curve = ideal response, range between the orange curves = uncertainty):

The fixed part of the uncertainty can be small compared to the proportional part, and vice versa. It depends on what is measured and the measurement method. Nevertheless, even if the actual value cannot go below zero, the measured value might do so.
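The combination of a fixed and a proportional contribution can be illustrated with a minimal sketch. The function name `uncertainty_band`, its parameters, and the example numbers are hypothetical, and the root-sum-of-squares combination is an assumption (it presumes the two contributions are independent); a real instrument may combine its uncertainties differently.

```python
import math


def uncertainty_band(actual, fixed, proportional):
    """Return (lower, upper) limits for a reading at the given actual value.

    fixed        -- uncertainty independent of the reading (same unit as actual)
    proportional -- uncertainty as a fraction of the actual value (0.02 = 2 %)
    """
    # Assumption: the two contributions are independent, so they are
    # combined as a root sum of squares rather than added linearly.
    total = math.sqrt(fixed ** 2 + (proportional * actual) ** 2)
    return actual - total, actual + total


# Illustrative values only: fixed part 1.0 unit, proportional part 2 %.
for actual in (0.0, 10.0, 100.0):
    low, high = uncertainty_band(actual, fixed=1.0, proportional=0.02)
    print(f"actual={actual:6.1f}  band=[{low:7.2f}, {high:7.2f}]")
```

At an actual value of zero the proportional part vanishes and only the fixed part remains, so the lower limit of the band lies below zero even though the actual value cannot.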

Readings Around Zero

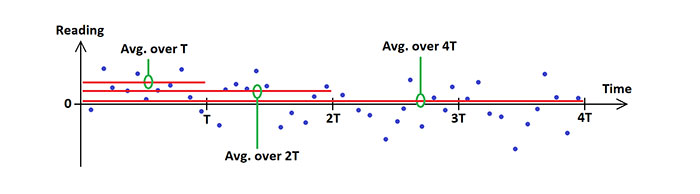

Let’s look at the case where the actual value is zero, and the instrument measuring the actual value has some built-in noise and drift around zero – many instruments behave like this. Measured over time, it is then just as probable that an individual reading is slightly above zero as it is slightly below zero, but it is rarely exactly zero.

However, if we average the readings over time, we will get closer and closer to the true zero value the more individual readings we include in the average. An average over time “T” is more likely close to the actual value than a random individual reading. Likewise, an average over twice that time (“2T”) is more representative than that over T, and an average over 4T is closer to the truth than that over 2T. It may look like this:

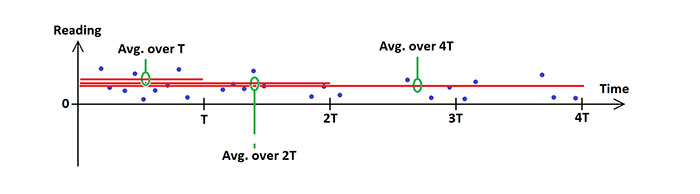
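The effect of longer averaging times can be reproduced with a small simulation. This is a sketch under stated assumptions, not a model of any particular instrument: the noise is taken to be Gaussian with zero mean and no drift, readings are independent, and the names `spread_of_averages` and `T` as well as all numbers are hypothetical.

```python
import random
import statistics


def spread_of_averages(window, trials=1000, sigma=1.0, seed=1):
    """Spread (standard deviation) of many averages of a true zero value.

    Each average is taken over `window` simulated readings with Gaussian
    noise of standard deviation `sigma` (an assumption for this sketch).
    """
    rng = random.Random(seed)
    averages = []
    for _ in range(trials):
        readings = [rng.gauss(0.0, sigma) for _ in range(window)]
        averages.append(statistics.fmean(readings))
    return statistics.pstdev(averages)


T = 50  # number of readings in the shortest averaging time (illustrative)
for window in (T, 2 * T, 4 * T):
    print(f"{window:4d} readings: spread of averages = {spread_of_averages(window):.3f}")
```

Under these assumptions the spread of the averages shrinks roughly with the square root of the number of readings, so an average over 4T scatters about half as much around zero as an average over T.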

But what if we decide to ignore all negative readings? It’s tempting since the actual value cannot go below zero. The result would be like this:

This looks almost the same as the previous graph, but watch the distances between the x-axis and the averages: they increase. Removing the individual negative readings results in increased averages, moving them away from the actual value.

Conclusion: rejecting individual readings below zero, or forcing them to zero, is not automatically a good idea. It can result in a poorer estimate of an actual value close to zero.
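The bias can be made concrete with a short simulation of an actual value of zero. As before, this is only a sketch: Gaussian, zero-mean noise is an assumption, and the function name `zero_estimates` and its parameters are hypothetical.

```python
import random
import statistics


def zero_estimates(n=10_000, sigma=1.0, seed=1):
    """Average simulated readings of a true zero in three different ways."""
    rng = random.Random(seed)
    # Assumption: Gaussian noise centred on the actual value (zero).
    readings = [rng.gauss(0.0, sigma) for _ in range(n)]
    kept = [r for r in readings if r >= 0.0]    # negative readings rejected
    clipped = [max(r, 0.0) for r in readings]   # negative readings forced to zero
    return {
        "all readings": statistics.fmean(readings),
        "negatives dropped": statistics.fmean(kept),
        "negatives forced to zero": statistics.fmean(clipped),
    }


for label, value in zero_estimates().items():
    print(f"{label:26s} average = {value:+.3f}")
```

For Gaussian noise with standard deviation σ, dropping the negative readings is expected to shift the average to about 0.80σ and forcing them to zero to about 0.40σ, whereas the average of all readings stays close to the actual value of zero. Longer averaging does not remove this offset.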

With that said…

This is a very simplified introduction to why negative readings do not have to mean that an instrument is bad. On the contrary, they are necessary to form the best possible estimate of the actual value. However, as in many other cases, it gets more complex once you start looking at all the situations that might occur. For example, there can certainly be negative readings that are not a result of noise but of actual problems with an instrument.

So, which negative readings are good, and which are bad? Answering that requires consideration of matters like “probability distribution”, “confidence interval”, “significance”, and “detection limit”, and some knowledge of mathematics and statistics to grasp it all. It can get quite complex. However, a knowledgeable instrument supplier will be able to guide you through all these aspects of measurement uncertainties and data validation. Please contact an OPSIS representative if you wish to have further insight into this topic when it comes to OPSIS monitoring solutions.

About the author

Bengt Löfstedt

Operative Support, OPSIS AB